A while back I was asked by the people from Electric Cloud if I would be interested in participating in a panel to talk about Orchestrating Enterprise Software Development as a part of their Continuous Discussions series.

I thought it was a very cool idea and of course said yes. The questions we talked about were things like:

- What does your test matrix look like?

- How do you define the pathway through your test cycle?

- How do you manage test data and environments?

- How have testing needs changed over time?

We had quite a nice blend of people from the IT industry with different backgrounds, so it was very interesting to hear other people’s stories, views and opinions! Have a look at the discussion right here:

Also see the blogpost of Electric Cloud here and the whole series here

Thanks Sam, Anders, Gilad and Avigail for having me!

For the people who can’t or don’t want to watch the video, here is a transcript of my contribution:

What does your test matrix look like?

Right now I’m building a lot of services for a customer, back-end, server-side. We do have a front-end but there is another team doing that. We have one large dependency on one of the main systems of the customer and we don’t have a lot of influence there. Those guys just run their own tests, do their own patches, and they don’t care about other things, because it’s the most important part of the company. So there we just have to make sure we keep in touch to know when they are going to patch, and make good agreements with them: how do they do it, when do they do it and what are the changes. But while some changes are well documented, for some we don’t even know what the changes are going to be. Suddenly all our unit tests break and then we see they patched something last night. We tried to come to an agreement with them to let us know in time, but in our experience it’s pretty hard. It’s a team located somewhere else and they have their own deadlines. We have some services that don’t depend on them, but most of our business requires that system.

We’re trying to change. Last year they had four big releases, and now they want to do it more often. This year I think they are aiming at doing a big patch every month. We’re trying to work towards it, but sometimes it’s difficult. We’ll see this year how it works out because, like I said, there are a lot of other programs depending on it. The more often you update it, the more often you impact other teams or pieces of software.

We update our system quite regularly, because nobody depends on us except the front-end. Basically we have a sprint every two weeks, and we’re trying to deliver every two weeks up to acceptance. So we still go through dev, test and acceptance and test up to there, but going live with an update needs to be planned a little bit more and we can’t do that every two weeks.

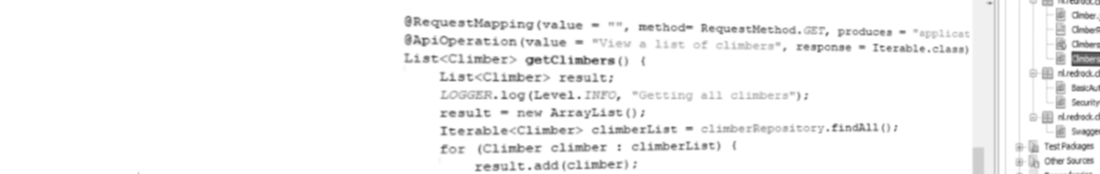

How do you define the pathway through your test cycle?

We kind of struggled in the beginning because, coming from a Java background, I wanted to have unit tests which we could run with our Jenkins server to test all of our services nightly. And we have a specific test crew within our team which should do the integration and system tests for us. In the beginning, we did the wrong tests in the wrong subsets. We tested too much in the unit tests: we did integration tests and system tests in there. We really needed to take a good look at whether our unit tests took too long. It was one of the things we wanted to know, and a unit test run shouldn’t take more than 20 minutes or so, it should be fast. So we kind of had to cut down and move some tests from the unit tests into the system tests, and that kind of worked for us. So we have them both run at night: first we have our unit tests, and later on we have a complete deployment to our test environment and then we have the system integration tests running there. We still sometimes have to distinguish what the core tests are and where each test fits in. We separate it by service, so the services we build are autonomous, and they’re tested autonomously as well. We really consider each service as one object, so if we change it, that service gets tested and not the whole set.

We have two teams right now, and they both work on the same set of services. I think we now have about 18 services with different operations on them, so we extend them or change them. Both of the teams are responsible for them. We have a programmer who says “I would like to have this and that functionality built”, so if it touches a lot of services, that’s what we’ve got to do. We really have to make sure we don’t fit too much in there, because that’s sometimes one of the pitfalls we have: two weeks is sometimes really short for fitting in a lot of functionality.
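To make the idea of moving tests out of the unit suite a bit more concrete, here is a minimal sketch in Python. It is not what we actually run on Jenkins; the names `MeasuredTest` and `split_suites`, the `needs_deployment` flag and the example tests are all made up for illustration. The only thing taken from the discussion is the rough 20 minute budget.

```python
from dataclasses import dataclass

# The "20 minutes or so" budget for the nightly unit test run.
UNIT_SUITE_BUDGET_SECONDS = 20 * 60


@dataclass(frozen=True)
class MeasuredTest:
    """A test with its measured duration (hypothetical structure)."""
    name: str
    seconds: float
    needs_deployment: bool  # True if it talks to a deployed service or database


def split_suites(tests, budget=UNIT_SUITE_BUDGET_SECONDS):
    """Split tests into (unit, system) lists of test names.

    Tests that need a deployed environment always go to the system suite.
    The remaining tests are kept in the unit suite, fastest first, until
    the time budget is used up; whatever does not fit moves to the system suite.
    """
    unit, system = [], []
    used = 0.0
    for test in sorted(tests, key=lambda t: t.seconds):
        if test.needs_deployment or used + test.seconds > budget:
            system.append(test.name)
        else:
            unit.append(test.name)
            used += test.seconds
    return unit, system


if __name__ == "__main__":
    nightly = [
        MeasuredTest("parse_claim", 2.0, False),
        MeasuredTest("policy_roundtrip", 900.0, True),
        MeasuredTest("big_calculation", 1500.0, False),
        MeasuredTest("validate_input", 5.0, False),
    ]
    unit, system = split_suites(nightly)
    print("unit suite:", unit)
    print("system suite:", system)
```

Sorting fastest first is just one possible choice; it keeps as many small tests as possible in the quick run and pushes the few slow ones to the later system run.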

How do you manage test environments and data?

Like I said before, that’s still one of the problems here and there for us. We have some services we built that don’t rely on a specific API of the big system, for example. We have our own database underneath those, so we’re in control. We usually have no problem over there. The big, important system we haven’t virtualized in any way and we haven’t mocked it, so we’re dependent on it here and there. When they say “Well, tomorrow we’re going to patch for eight hours”, we just have to deal with that. We’re looking at a way to be able to run without it: is there any way to virtualize this? Maybe, yeah, with Chef or Puppet, or get a snapshot of the system and put something in the air. We don’t do that yet, we have to look at that next year. It’s really quite a complicated system with a large database underneath, so it’s not that easy to make a simple snapshot of it.

We’re not testing in production, definitely not. We have two test environments, one with a simulation chain and one for the release chain. Same for the acceptance test. We also have a dedicated **PLS** (Performance, Load, Stress) test environment. But there’s only one of those, and it is used by a lot of other programs and teams within the company. So over there, too, we have to discuss with them who’s using the test environment, because the CPU usage is quite high, so we maybe have to wait a couple of hours and see who is using it, and then we can run our tests. There is room for improvement there as well: maybe we can virtualize it, or make sure we can run a **PLS** test whenever we want to and not be dependent on any other teams within our company. This is also something we want to have a look at. It’s just static, we can’t use any tool like Docker, and we need an extra environment.
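As an illustration of what “running without it” could look like at the smallest scale, here is a hedged Python sketch of a stand-in for the big system. We have not built this; `CoreSystem`, `FakeCoreSystem`, `fetch_policy` and `policy_summary` are hypothetical names, and the real system’s API certainly looks different.

```python
from typing import Protocol


class CoreSystem(Protocol):
    """The small part of the big system that our services would call (assumed)."""

    def fetch_policy(self, customer_id: str) -> dict: ...


class FakeCoreSystem:
    """In-memory stand-in, usable while the real system is being patched."""

    def __init__(self, policies: dict):
        self._policies = dict(policies)

    def fetch_policy(self, customer_id: str) -> dict:
        try:
            return self._policies[customer_id]
        except KeyError:
            raise LookupError(f"unknown customer: {customer_id}") from None


def policy_summary(core: CoreSystem, customer_id: str) -> str:
    """Example service logic that only depends on the CoreSystem interface."""
    policy = core.fetch_policy(customer_id)
    return f"{customer_id}: {policy['product']} ({policy['status']})"


if __name__ == "__main__":
    fake = FakeCoreSystem({"c-1": {"product": "basic", "status": "active"}})
    print(policy_summary(fake, "c-1"))
```

A fake like this only covers the calls you teach it, so it does not replace a snapshot or a virtualized copy of the real system with its large database. It would only keep our own tests running during a patch window.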

How have testing needs changed over time?

We came to the conclusion that we want to be more thorough. In the beginning, we had some key stuff that we really wanted to be sure was running. Then we put our stuff into production and found out that here and there we got some exotic situations in which we found bugs. Like, oh my god, nobody, or maybe somebody within the company, knew about that one rare exception with one customer, and we got that one customer right over here. How do we make sure that we don’t hit any speed bumps like that anymore? We sat together and asked how we can filter out these exceptions. How can we find out who within the company knows all these possible exceptions, and make tests for them? So one of the things is that we wanted to be more thorough so our quality will be higher. Another thing that we started at the beginning and later gave more weight to was performance and load tests. In the beginning we just wanted to be sure the first things ran, and later on we said, well, the company that I work for now is in health insurance, so the load is mostly at the end of the year. During the year there’s not much movement, but at the end of the year everybody is going to make changes to their health insurance, so there is going to be a load at the end and the beginning of the year. So we wanted to make sure we can handle the load. At the beginning of the year we went live and said “We’re OK for now, but we’re going to have to prepare for the peak that’s coming at the end”. So we paid more attention to how we run our performance and load tests: for every service, at the end of every week, we try to run them. If we’ve made changes, we can immediately see at the end of the week whether we changed something that impacts performance. If you only do it at the end, you’re probably going to be too late, or you’re going to say “OK, we have a performance issue, but where is it?”. Then you have to dig into the code: where could it be? Is it in the database? I don’t know.
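The weekly comparison can be sketched roughly like this in Python. It is an illustration under assumptions, not our actual tooling: `find_regressions`, the 20% tolerance, the service names and the response times are all invented for the example.

```python
from statistics import median


def find_regressions(baseline, current, tolerance=0.20):
    """Compare this week's response times per service against last week's.

    Both arguments map a service name to a list of response times in
    milliseconds. A service is reported when its median got slower by more
    than `tolerance` (0.20 means 20%). Returns {service: (before, after)}.
    """
    regressions = {}
    for service, samples in current.items():
        if service not in baseline:
            continue  # new service, nothing to compare against yet
        before = median(baseline[service])
        after = median(samples)
        if after > before * (1 + tolerance):
            regressions[service] = (before, after)
    return regressions


if __name__ == "__main__":
    last_week = {"claims": [100, 110, 105], "policies": [50, 52, 51]}
    this_week = {"claims": [150, 160, 155], "policies": [51, 50, 53]}
    for service, (before, after) in find_regressions(last_week, this_week).items():
        print(f"{service}: median went from {before} ms to {after} ms")
```

Because the comparison is per service and per week, a slowdown points at one service and one week of changes, which is a much smaller place to dig than the whole code base at the end of the year.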